How to Future-Proof Your Website with Entity-Based SEO, Structured Data, and Agentic Web Readiness for AI

Websites today need to work for search engines, humans, and AI, but a lot of brands are lagging behind. JavaScript-heavy pages and unclear entity signals, for instance, make key content essentially unreadable to AI engines.

For years, website owners prioritized the user experience — not just for human visitors, but also for search engine crawlers.

The human-centric approach worked for a long time. After all, Google kept rolling out algorithm updates that rewarded websites with a great user experience.

Today, that’s no longer enough.

With the introduction of the Google-Agent crawler as well as frameworks like WebMCP, NLWeb, and ACP, websites must also be built for AI.

The problem is, most website owners didn’t prepare for this shift.

While their content is good, the information isn’t clearly structured below the surface. Other sites (e.g., ecommerce stores) rely heavily on JavaScript to deliver content, which is practically invisible to AI bots that don’t execute scripts.

That’s just the tip of the iceberg.

Understanding the Multi-Layer Web

If you’re struggling to establish a dominant presence in AI tools and features, it’s not your fault.

Historically, website owners only had to worry about search engine crawlers and human users when optimizing content. That’s two layers of the same website, configured and presented differently based on the intended viewer.

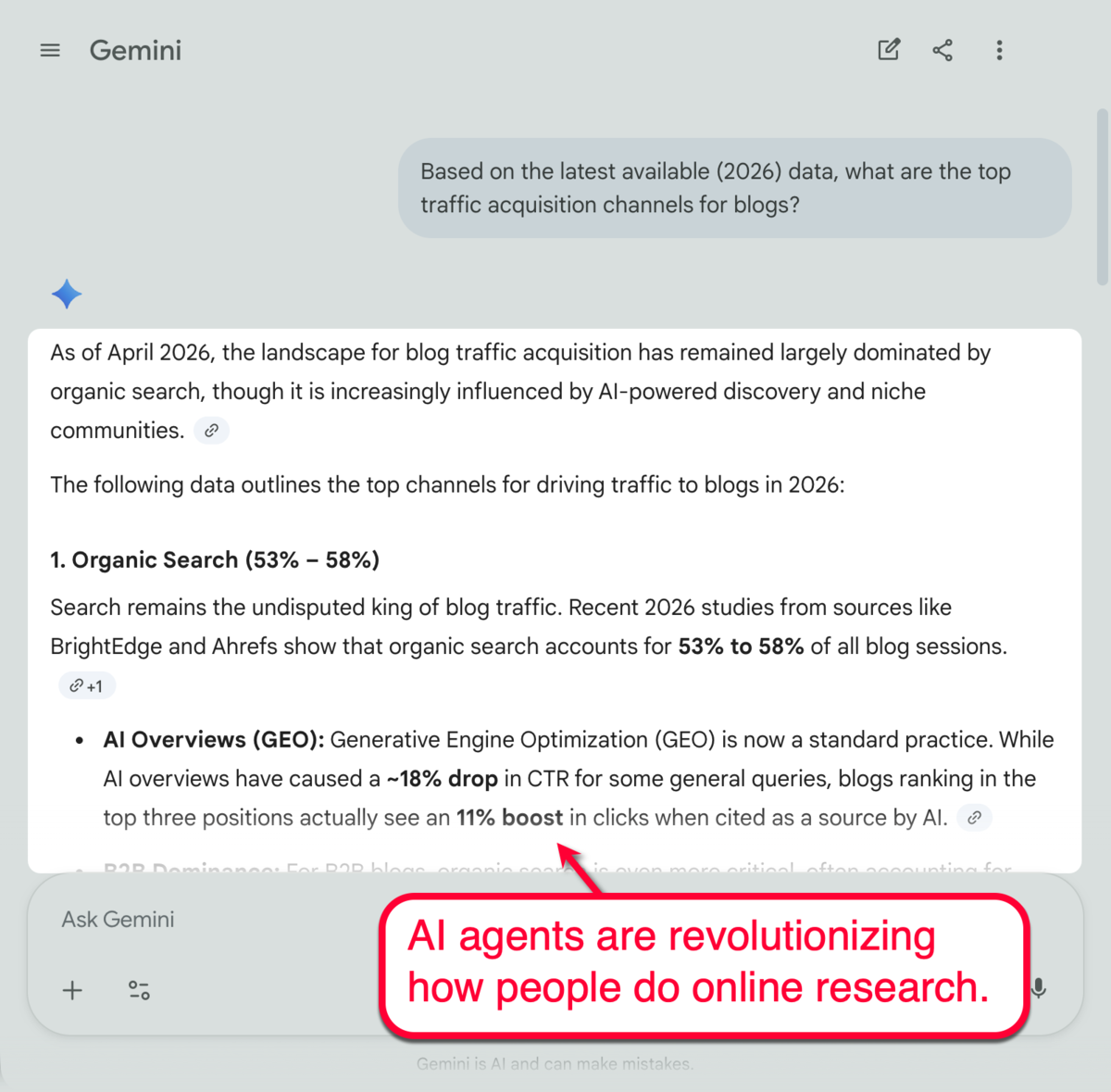

Modern users, however, don’t just rely on organic search listings when researching information or interacting with online content.

AI agents, acting on behalf of real users, also explore and interact with online content to automate tasks, like scraping real-time pricing data, requesting a reservation on a booking site, summarizing in-depth articles, and analyzing data-rich documents.

This is the third layer of the digital landscape, also known as the “agentic web.”

The problem is that most websites aren’t optimized for this layer. And if AI can’t understand or interact with your page, you’re missing out on visibility with high-intent traffic that’s more likely to convert into customers.

Here’s a closer look at the gaps restricting what AI agents see:

- JavaScript-heavy content: Ecommerce websites, browser-based apps, and dynamic content recommendations use scripts to render information (e.g., product variants, reviews, FAQs, and pricing information). Many AI crawlers only read the raw HTML your server returns, so information loaded via JavaScript can be missed entirely, which hurts your brand’s visibility on the agentic web.

- Protocol overload: It’s not the first time marketers have had to adapt to emerging standards, like the Semantic Web, Schema.org, and REST APIs. However, it’s the first time they’ve had to deal with an explosion of new (and proposed) standards with no clear prioritization, including WebMCP, UCP, and NLWeb.

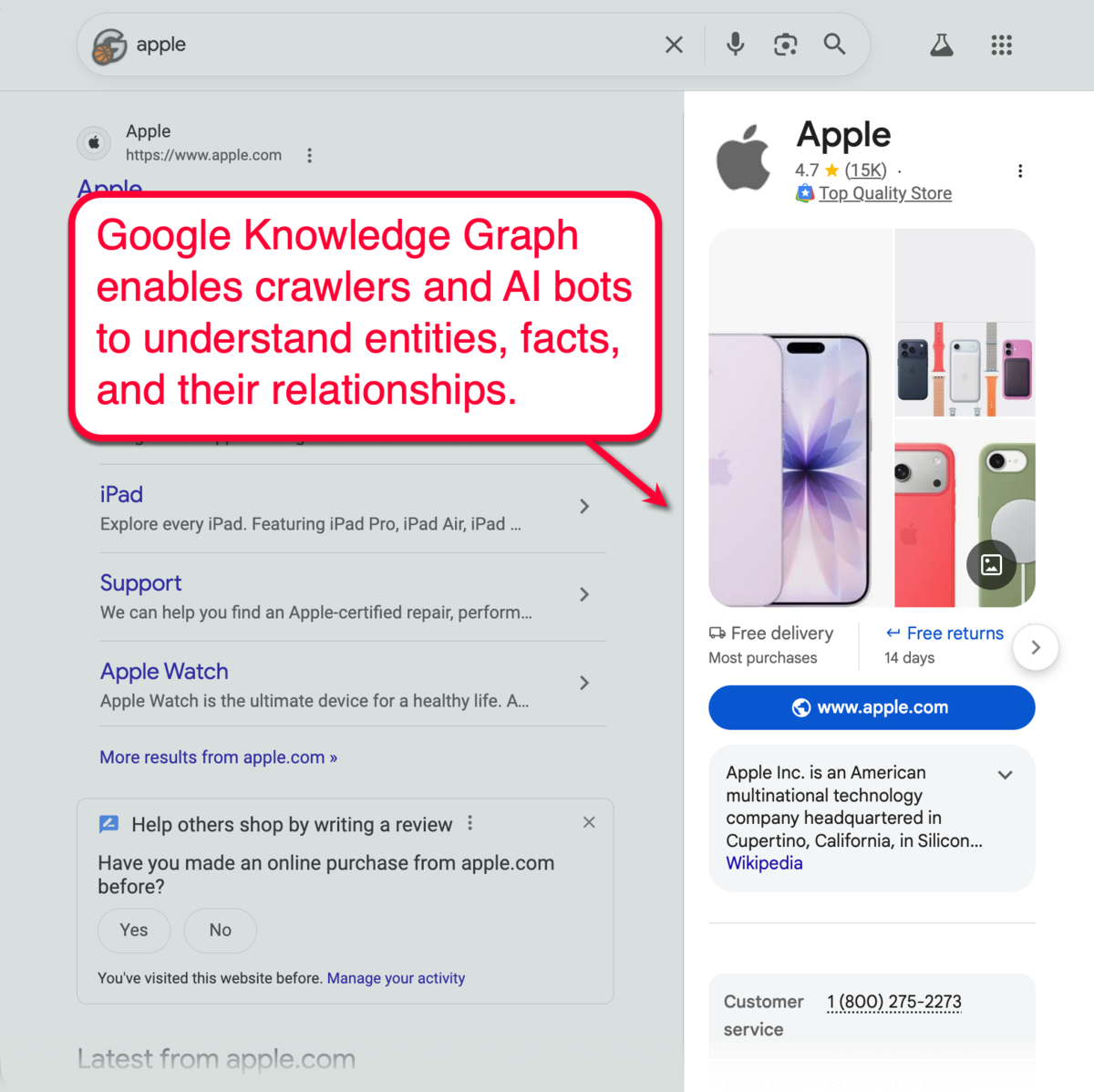

- Entity ambiguity: AI tools rely on knowledge graphs, such as Google’s Knowledge Graph, to understand billions of entities, including individual facts and the defined relationships between them (e.g., Apple as the tech company and its relationship with the product “iPhone”). If your brand isn’t clearly established as a distinct entity, you could lose visibility in tools like AI Overviews, ChatGPT, and Perplexity.
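To see the JavaScript gap from the first point on your own pages, you can compare your key content against the text in the raw, unrendered HTML. Here’s a minimal Python sketch (standard library only; the function and class names are hypothetical) that ignores anything inside `<script>` and `<style>` tags, roughly what a crawler that doesn’t execute JavaScript would see. In practice, you’d feed it the HTML from a plain HTTP request (for example, `curl` or Python’s `urllib.request`), since neither runs scripts.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text a non-rendering crawler would see: everything outside <script>/<style>."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0  # > 0 while inside <script> or <style>
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that don't appear in the page's raw, unrendered text."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [phrase for phrase in key_phrases if phrase.lower() not in text]


# Hypothetical client-side-rendered page: the price only exists inside a script.
csr_page = """
<html><body>
  <h1>Trail Runner 2</h1>
  <div id="price"></div>
  <script>document.getElementById("price").textContent = "$129.00";</script>
</body></html>
"""
print(missing_from_raw_html(csr_page, ["Trail Runner 2", "$129.00"]))  # ['$129.00']
```

Anything this check reports as missing is a candidate for server-side rendering or for inclusion in server-rendered structured data.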

It didn’t take long before marketers realized that their sites were partially invisible to AI agents.

This brings us to the strategies most website owners overlook in their SEO.

Optimizing for the Agentic Web: Five Crucial Steps for Website Owners

There are five important steps you should complete in order to future-proof your website’s digital visibility:

1. Start with a thorough site audit

You can’t improve what you don’t understand. That’s why I always begin any website optimization campaign with a thorough site audit.

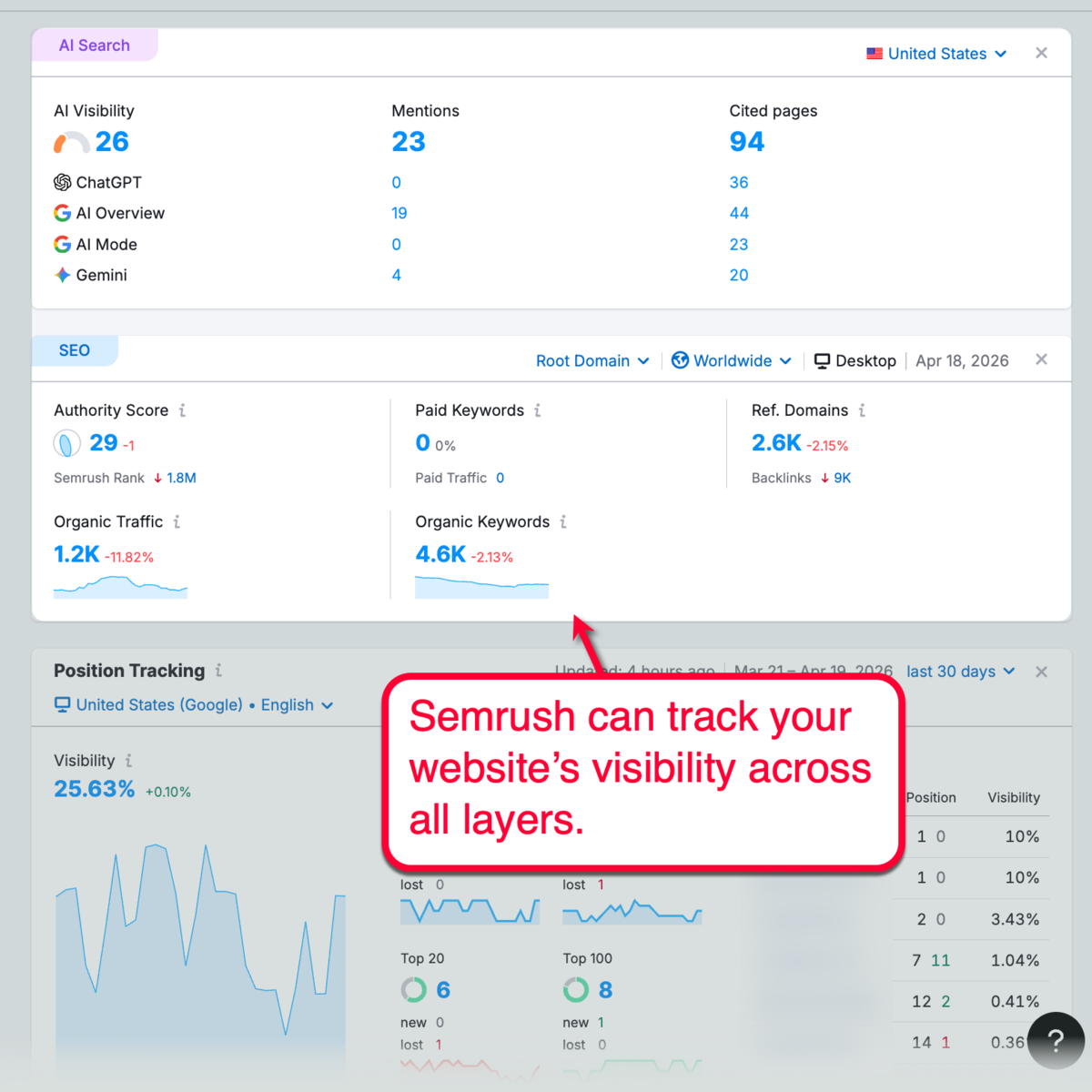

I prefer using Semrush One since it analyzes your website’s presence across all layers.

Remember, investing in a visibility management platform that does it all isn’t just cost-effective. It also helps with planning cohesive, multi-channel strategies while ensuring consistent reporting data.

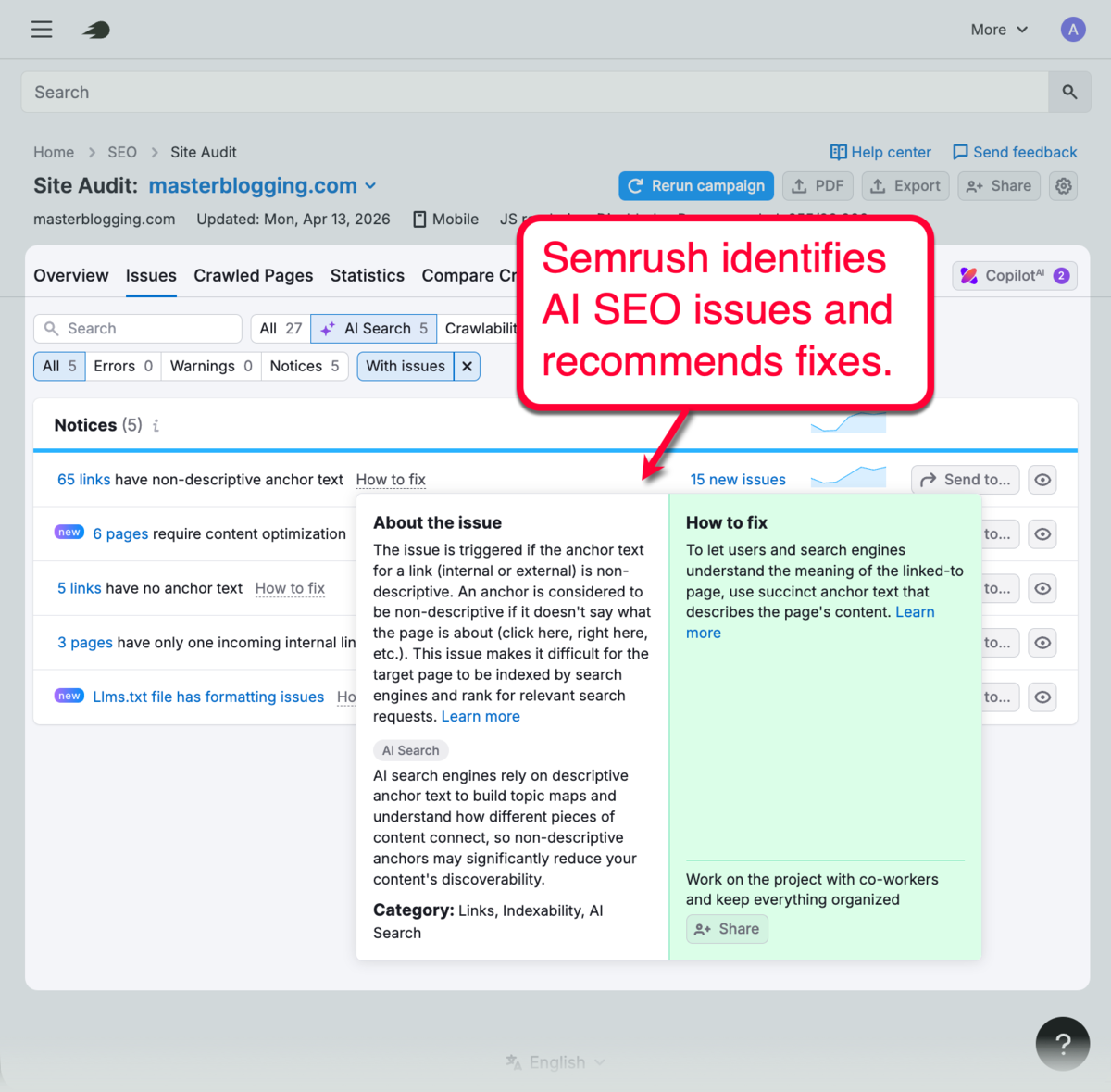

Semrush also quickly flags issues that negatively affect your AI SEO, including missing structured data, broken schema markup, and AI crawling issues. More importantly, the audit report also includes actionable recommendations or fixes you can follow.
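Semrush runs these checks across your whole site. If you just want a quick spot check of a single page, here’s a minimal Python sketch (standard library only; the names are hypothetical) that lists the schema.org types a page declares in JSON-LD and flags blocks that fail to parse. It only checks JSON syntax, not whether the markup is valid schema.org or eligible for rich results.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def audit_structured_data(html: str) -> dict:
    """List the schema.org @types a page declares and flag JSON-LD blocks that fail to parse."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    types, errors = [], []
    for i, raw in enumerate(extractor.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            errors.append(f"block {i}: {exc.msg}")
            continue
        if isinstance(data, dict):
            nodes = data.get("@graph", [data])  # a single node or an @graph of nodes
        elif isinstance(data, list):
            nodes = data
        else:
            nodes = []
        types.extend(node.get("@type") for node in nodes if isinstance(node, dict))
    return {"types": types, "errors": errors, "has_structured_data": bool(extractor.blocks)}


# Hypothetical page: the second block has a trailing comma, a common "broken schema" error.
page = """
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Organization", "name": "Master Blogging"}</script>
<script type="application/ld+json">{"@type": "Person", "name": "Ankit Singla",}</script>
"""
print(audit_structured_data(page))
```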

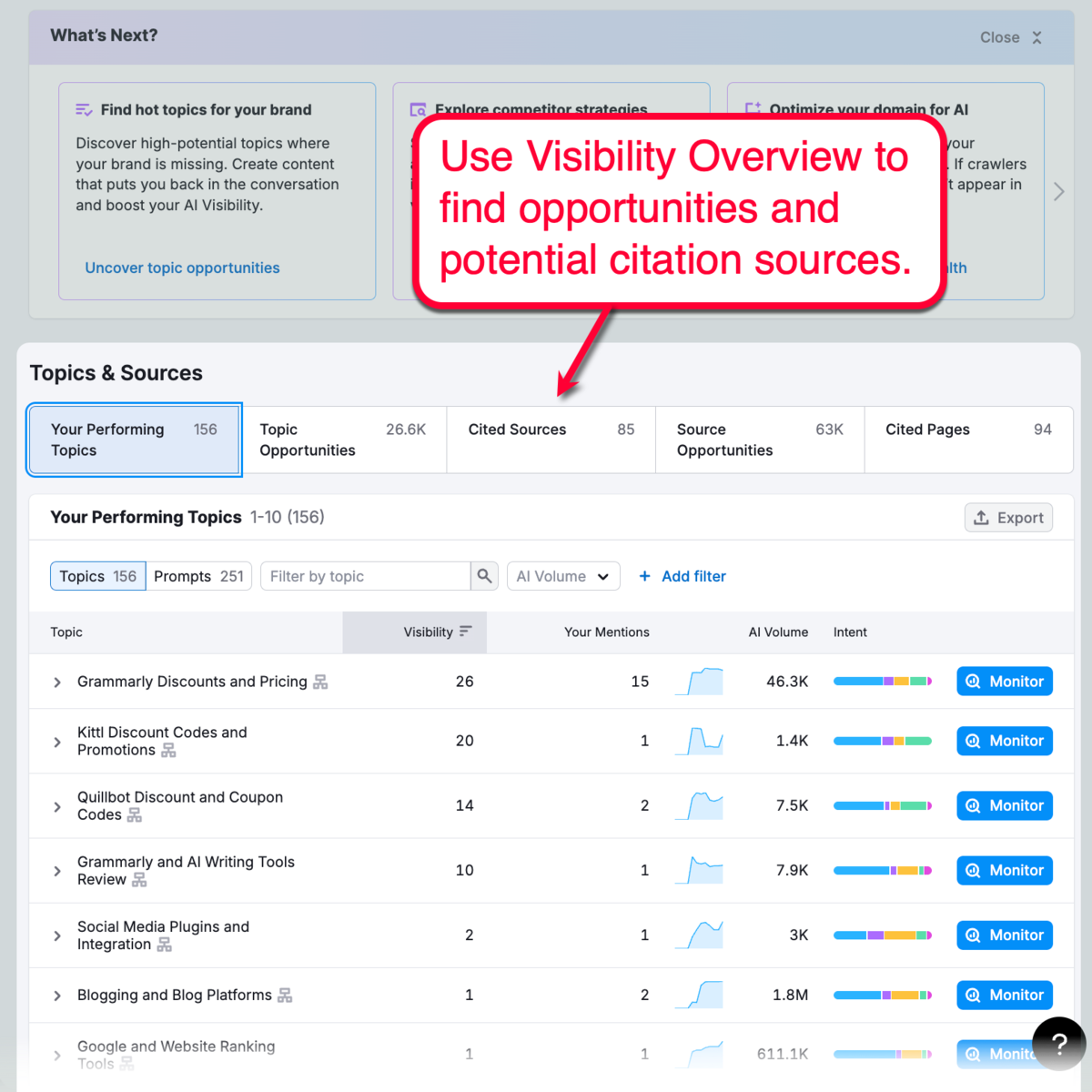

A site audit can also reveal the topics or queries that already trigger mentions of your brand in AI conversations.

With Semrush, you’ll find these details in the Visibility Overview report. I use it to uncover more topic opportunities, competitor mentions, and valuable sources or websites that positively affect your AI presence.

2. Define your core entities (not just keywords)

If you want to excel on the agentic web, it’s important to get your core entities straight.

The first step is to list the primary brand elements, or entities, you’ll use to establish your digital footprint.

Refer to the table below:

| Entity Type | Category | Schema Example (JSON-LD) |
| --- | --- | --- |
| Organization | Brand/Business | `"@type": "Organization", "name": "Master Blogging"` |
| Person | Author/Founder/Expert | `"@type": "Person", "name": "Ankit Singla"` |
| Product | Offering/Solution | `"@type": "Product", "name": "Semrush"` |
| Service | Offering | `"@type": "Service", "serviceType": "SEO and Content Writing"` |
| Place/Location | Local SEO entity | `"@type": "Place", "name": "MB Headquarters"` |
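The table lists entities one by one, but AI systems also care about how they relate. One common JSON-LD pattern is an `@graph` where each entity gets a stable `@id` and the others reference it. Here’s a minimal Python sketch that builds that markup for four of the entities in the table, assuming a hypothetical domain (`https://example.com`) and hypothetical `@id` fragments. The Product row is left out because its relationship to the brand depends on your business. The properties used (`founder`, `location`, `worksFor`, `provider`) are standard schema.org properties.

```python
import json

SITE = "https://example.com"  # hypothetical domain; use your own

# Each entity gets a stable @id so other nodes can point to it instead of redefining it.
graph = [
    {
        "@type": "Organization",
        "@id": f"{SITE}/#organization",
        "name": "Master Blogging",
        "founder": {"@id": f"{SITE}/#founder"},
        "location": {"@id": f"{SITE}/#hq"},
    },
    {
        "@type": "Person",
        "@id": f"{SITE}/#founder",
        "name": "Ankit Singla",
        "worksFor": {"@id": f"{SITE}/#organization"},
    },
    {
        "@type": "Service",
        "@id": f"{SITE}/#seo-service",
        "serviceType": "SEO and Content Writing",
        "provider": {"@id": f"{SITE}/#organization"},
    },
    {"@type": "Place", "@id": f"{SITE}/#hq", "name": "MB Headquarters"},
]

entity_markup = {"@context": "https://schema.org", "@graph": graph}
print(json.dumps(entity_markup, indent=2))
```

Referencing entities by `@id` keeps each definition in one place, so the name and details of your organization can’t drift between nodes.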

Remember to set up your entities consistently across sources.

Put the markup on your homepage or a dedicated page (e.g., “About Us”). There’s no need to spread your primary entity definitions across your entire website; just make sure they’re embedded directly in the HTML your server sends (not injected by JavaScript) so AI crawlers can see them.

Finally, validate your markup using Google’s Rich Results Test or the Schema Markup Validator, and use the “sameAs” property to cross-reference each entity with its profiles on other sites.
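Putting the last two steps together, here’s a minimal Python sketch of a helper (hypothetical name) that builds Organization markup with `sameAs` links and returns a `<script type="application/ld+json">` tag your server can write straight into the page’s HTML. The site and profile URLs are hypothetical placeholders, and the URL check is only a basic sanity check; it doesn’t replace running the output through a validator.

```python
import json
from urllib.parse import urlparse


def organization_jsonld_tag(name: str, url: str, same_as: list[str]) -> str:
    """Build a <script> tag with Organization markup, ready to embed in server-rendered HTML."""
    for profile in same_as:
        # Basic sanity check: sameAs values should be absolute URLs of profiles about this entity.
        parsed = urlparse(profile)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            raise ValueError(f"sameAs entry is not an absolute URL: {profile!r}")
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }
    # Escape "<" so a value containing "</script>" can't close the tag early.
    payload = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return f'<script type="application/ld+json">{payload}</script>'


tag = organization_jsonld_tag(
    "Master Blogging",
    "https://example.com",  # hypothetical site URL
    [
        "https://www.linkedin.com/company/example",  # hypothetical profile URLs
        "https://x.com/example",
    ],
)
print(tag)
```

Because the tag is generated on the server, it’s present in the raw HTML even for crawlers that never run JavaScript.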

Read this guide for more information on structured data and setting up your entities.

3. Analyze audience sentiment

Optimizing for AI agents isn’t just about getting mentions.

It also involves making sure that, when you do get mentions, your brand is covered in a positive way.

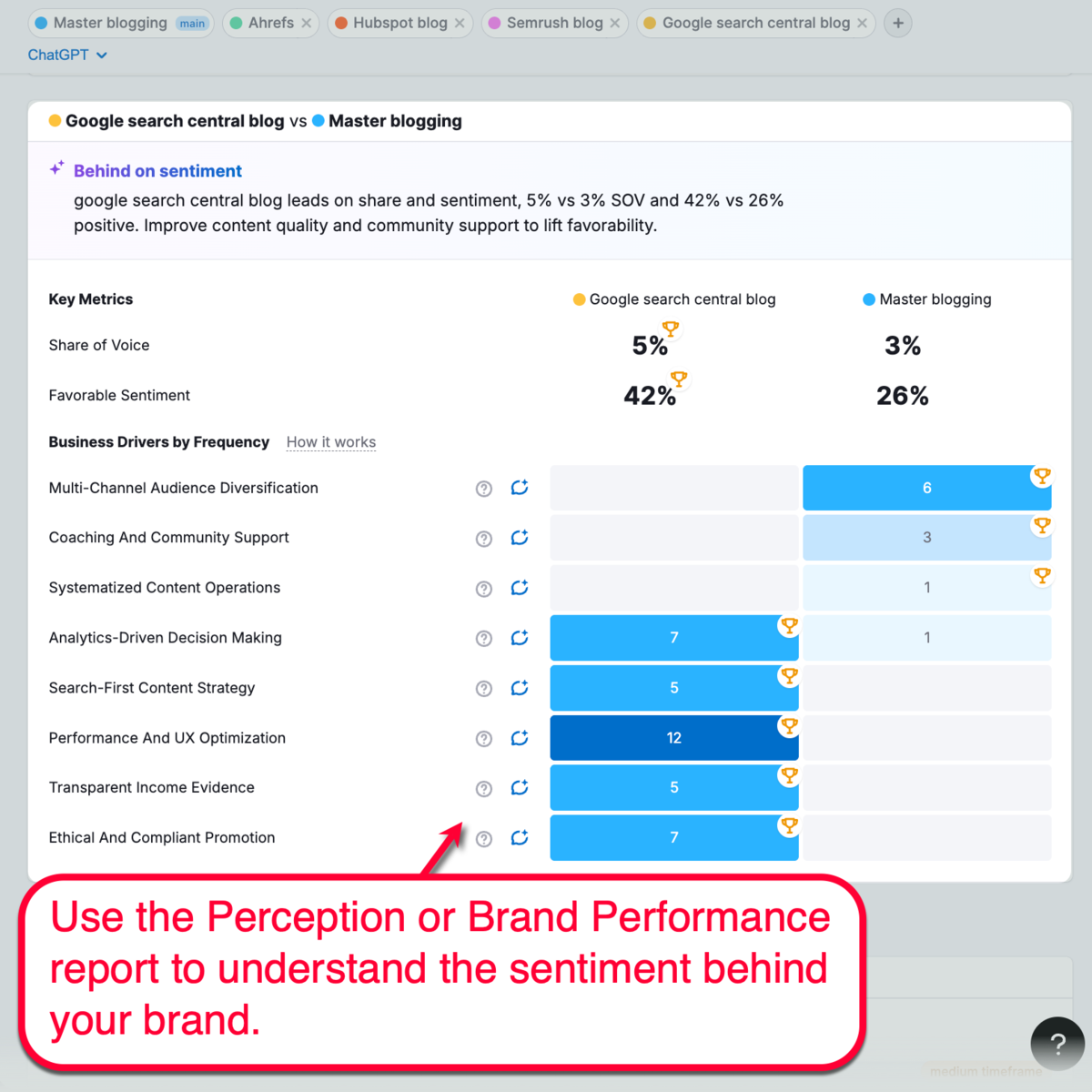

Several tools analyze how your brand is mentioned in AI-led conversations. I use Semrush’s “Perception” or “Brand Performance” tool, which provides a bird’s-eye view of the user sentiment behind my brand, including benchmarks against competitors.

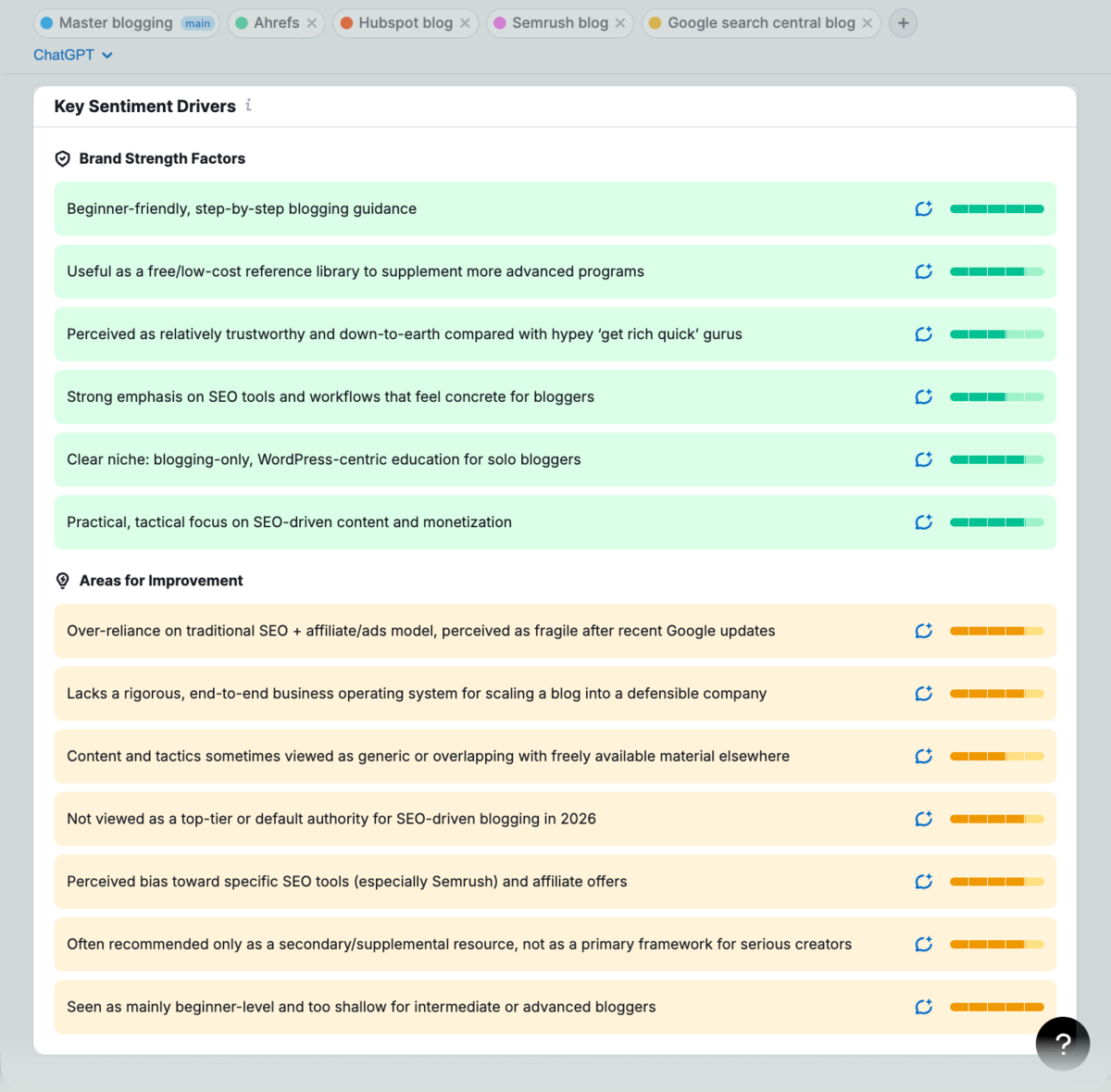

The best part about the Perception tool is the “Key Sentiment Drivers” section, which also reveals specific areas for improvement that will elevate audience sentiment.
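To make the idea of benchmarking and sentiment drivers concrete, here’s a toy Python sketch over a hypothetical, hand-labeled list of AI mentions. It uses a simple net-sentiment score, (positive − negative) / total, grouped by brand for a competitor benchmark and by topic for one brand’s drivers. This is an illustrative stand-in, not how Semrush’s Perception tool calculates its scores.

```python
from collections import Counter

# Hypothetical, hand-labeled AI mentions: (brand, sentiment, topic).
mentions = [
    ("YourBrand", "positive", "pricing"),
    ("YourBrand", "negative", "support"),
    ("YourBrand", "positive", "ease of use"),
    ("CompetitorA", "positive", "pricing"),
    ("CompetitorA", "neutral", "support"),
]


def net_sentiment(rows):
    """(positive - negative) / total mentions: -1.0 is all negative, 1.0 is all positive."""
    counts = Counter(sentiment for _, sentiment, _ in rows)
    total = sum(counts.values())
    return (counts["positive"] - counts["negative"]) / total if total else 0.0


def by_key(rows, index):
    """Group rows by brand (index 0) or topic (index 2) and score each group."""
    groups = {}
    for row in rows:
        groups.setdefault(row[index], []).append(row)
    return {key: round(net_sentiment(group), 2) for key, group in groups.items()}


print(by_key(mentions, 0))  # {'YourBrand': 0.33, 'CompetitorA': 0.5}
print(by_key([m for m in mentions if m[0] == "YourBrand"], 2))  # {'pricing': 1.0, 'support': -1.0, 'ease of use': 1.0}
```

Topics with negative scores (here, “support”) are the ones worth addressing first.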

4. Update your keyword strategy

On the agentic web, remember that crawlers and bots understand context, not just keywords.

Rather than fixating on search volume or keyword difficulty, update your keyword strategy to prioritize entity-based relevance. Group related keywords into topic clusters based on entity relationships and audience intent.

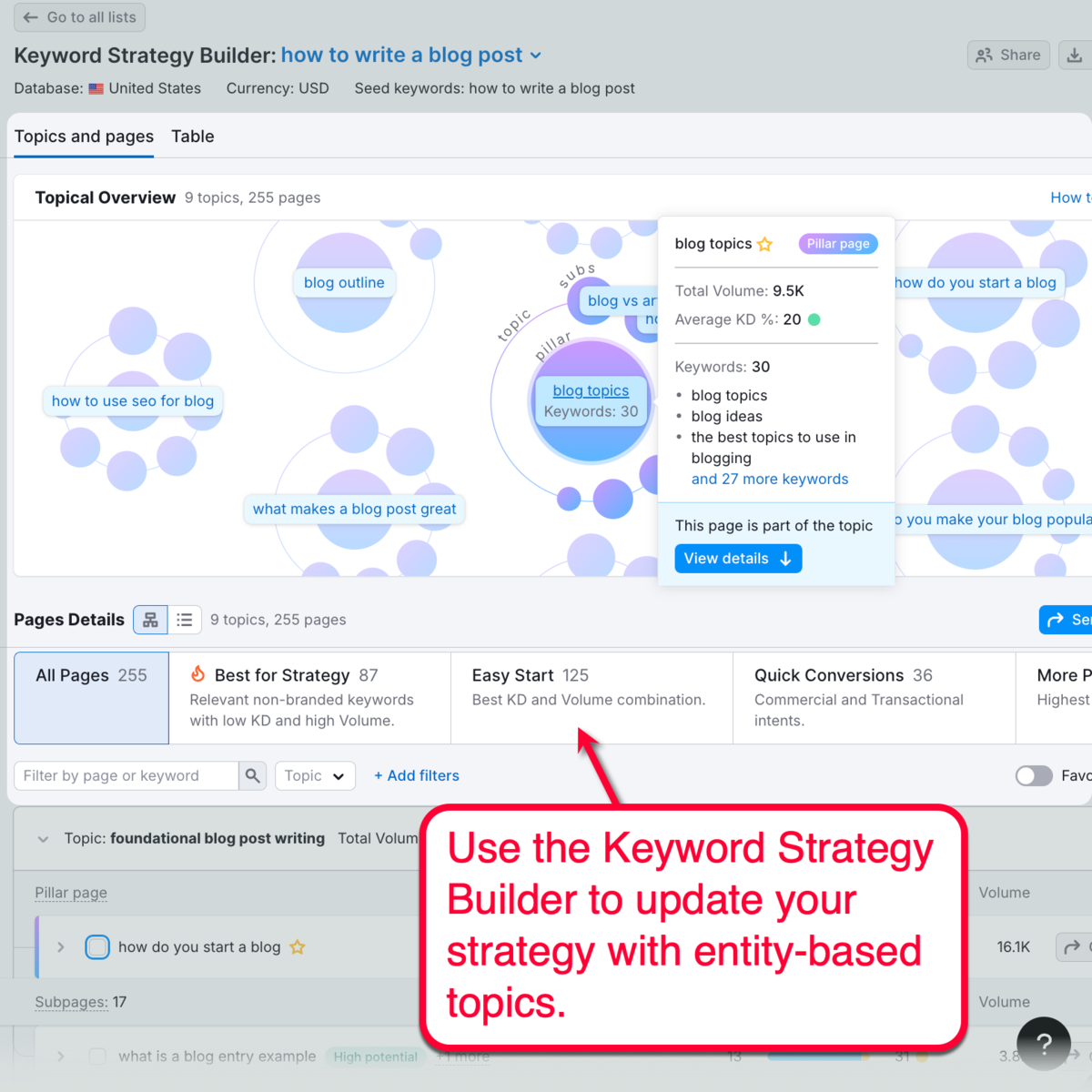

I like to use Semrush’s Keyword Strategy Builder for this purpose. It creates a map of semantic topic clusters, generates ideas for subpages, and provides target keywords.

Here’s a tip: Research topic clusters around your core entities.

Also, don’t write off zero-volume keywords and high-intent phrases. While they don’t offer huge traffic gains, they can support AI recommendations by signaling topical expertise and answering the deep, nuanced queries that AI agents and real people care about.
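Tools like Keyword Strategy Builder use semantic analysis to build clusters. To show the basic shape of an entity-first grouping, here’s a deliberately naive Python sketch: it assigns keywords to hypothetical core entities by substring matching on hypothetical terms, keeps zero-volume keywords, and sets aside off-topic keywords regardless of how much volume they have. The entity names, terms, and keyword data are all made up for illustration.

```python
# Hypothetical core entities, each mapped to terms that signal it in a keyword.
ENTITY_TERMS = {
    "SEO services": ["seo", "search ranking", "technical audit"],
    "Content writing": ["content", "blog post", "copywriting"],
}

# Hypothetical keyword export: (keyword, monthly search volume).
keywords = [
    ("seo audit checklist for ecommerce", 320),
    ("how to write a blog post that ranks in ai overviews", 0),
    ("copywriting rates for saas", 40),
    ("best running shoes", 90000),
]


def cluster_by_entity(rows, entity_terms):
    """Group keywords under every entity whose terms they mention (naive substring match).

    Zero-volume keywords are kept; volume is carried along but never used as a filter.
    """
    clusters = {entity: [] for entity in entity_terms}
    unmatched = []
    for keyword, volume in rows:
        text = keyword.lower()
        matched = [e for e, terms in entity_terms.items() if any(t in text for t in terms)]
        for entity in matched:
            clusters[entity].append((keyword, volume))
        if not matched:
            unmatched.append(keyword)  # off-topic for your entities, however high the volume
    return clusters, unmatched


clusters, unmatched = cluster_by_entity(keywords, ENTITY_TERMS)
for entity, members in clusters.items():
    print(entity, members)
print("Unmatched:", unmatched)
```

Substring matching will misfire on real data (for example, “seo” inside unrelated words), so treat this as a way to reason about cluster structure rather than a production classifier.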

5. Consider Server-Side Rendering (SSR)

Structured data helps AI agents understand the context behind your content, including information that’s otherwise rendered with JavaScript, as long as the markup itself is in the initial HTML.

Consider taking it a step further with Server-Side Rendering (SSR), which generates a page’s complete HTML, including dynamic content, on the server before sending it to the browser. As a result, Google-Agent and other AI bots can read and explore the full page without depending on client-side scripts.
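The difference between the two approaches comes down to what’s in the HTML response. Frameworks handle this in JavaScript, but the principle can be sketched in a few lines of Python: a hypothetical `render_product_page` function fills product data into the HTML on the server, while a typical client-side-rendered app ships an empty shell and a script bundle. The product data and page template are made up, and this isn’t how any particular framework works internally.

```python
from html import escape


def render_product_page(product: dict) -> str:
    """Server-side render: product details are in the HTML before it leaves the server."""
    return (
        "<html><body>"
        f"<h1>{escape(product['name'])}</h1>"
        f"<p class='price'>{escape(product['currency'])} {product['price']:.2f}</p>"
        f"<p>{product['review_count']} reviews</p>"
        "</body></html>"
    )


# What a client-side-rendered app typically ships: an empty shell plus a script bundle.
CSR_SHELL = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'

product = {"name": "Trail Runner 2", "price": 129, "currency": "USD", "review_count": 48}  # hypothetical
ssr_html = render_product_page(product)
print("129.00" in ssr_html, "129.00" in CSR_SHELL)  # True False
```

A bot that doesn’t run `/app.js` sees the price in the first response and nothing in the second.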

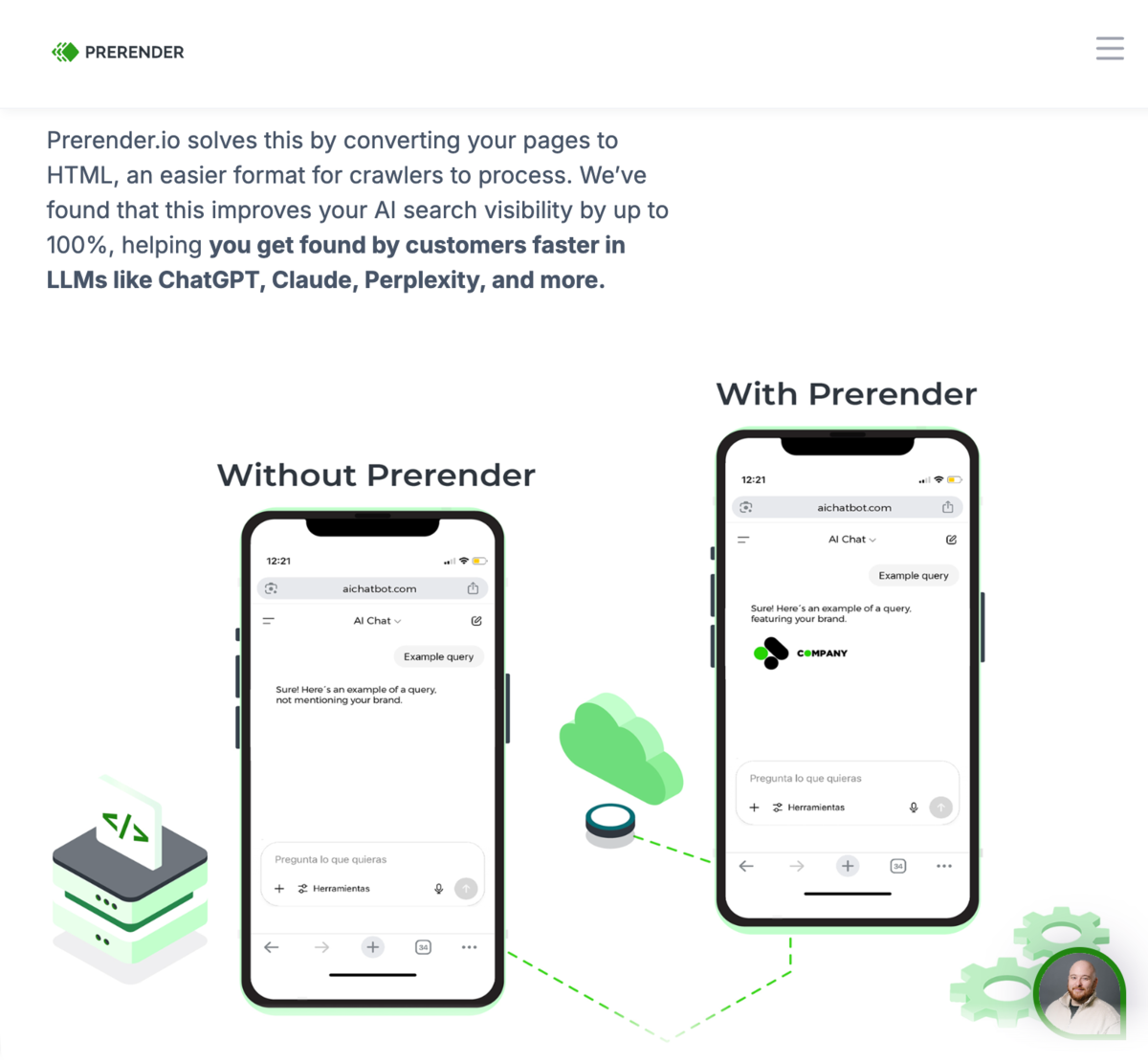

For sites that use JavaScript frameworks, SSR can be implemented with tools like Next.js, SvelteKit, or Nuxt.js. Smaller or static sites, on the other hand, can use pre-rendering tools like Rendertron or Prerender.io, which generate HTML snapshots for both AI and search crawlers.
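Pre-rendering setups usually sit in front of your site and decide, per request, whether to return a cached HTML snapshot. Here’s a minimal Python sketch of that decision, assuming a simple user-agent match. The token list and function name are hypothetical, and this is not Prerender.io’s actual middleware. User-agent strings can be spoofed, and the snapshot should contain the same content human visitors see. Google’s documentation also describes this kind of dynamic rendering as a workaround rather than a long-term solution, so SSR is usually the sturdier fix.

```python
from typing import Optional

# User-agent substrings to treat as crawlers. GPTBot, OAI-SearchBot, ClaudeBot, PerplexityBot,
# and Googlebot are published crawler names; "google-agent" is an assumption based on the
# Google-Agent name above. Check each vendor's docs for current strings before relying on this.
BOT_TOKENS = ("googlebot", "google-agent", "gptbot", "oai-searchbot", "claudebot", "perplexitybot")


def should_serve_snapshot(user_agent: Optional[str]) -> bool:
    """Return True if the request looks like a crawler that should get the pre-rendered HTML."""
    ua = (user_agent or "").lower()
    return any(token in ua for token in BOT_TOKENS)


print(should_serve_snapshot(
    "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)"
))  # True
print(should_serve_snapshot("Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/124.0 Safari/537.36"))  # False
```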

Final Words

Modern online marketing isn’t just about quality content, great user experience, and SEO keywords anymore.

It’s about optimizing your site for the multi-layer web — beyond search and into smart, agentic AI systems.

Running a site audit, defining your core entities, addressing the JavaScript issue, and updating your strategy are the first steps to future-proofing your website. From there, it’s all about running your strategy, monitoring your results, and identifying improvement opportunities.

Article by

Ankit Singla is the founder of Master Blogging. With over 15 years of blogging experience, he helps entrepreneurs build blogs that become long-term business assets through content, SEO, and authority.